The $350 Billion Bet Against Human Labor

Consumer subscriptions can't justify trillion-dollar AI investments. The real business model is workforce replacement at scale—and this reshapes who holds power in the economy.

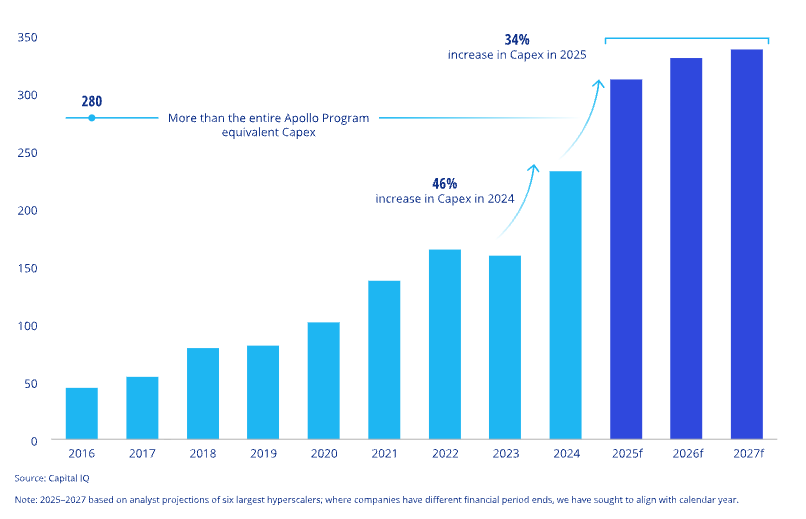

In 2025, we're witnessing infrastructure investments of a scale not seen since the buildout of the electrical grid or the interstate highway system. Hyperscalers and major AI providers are pouring roughly $340 billion into AI compute this year alone. Add mega-programs like Stargate (up to $500 billion) or Meta's massive cluster buildouts, and annual investment pushes well beyond $350 billion.

These numbers are so large they become abstract. So let's make them concrete.

The Math Doesn't Work for Subscriptions

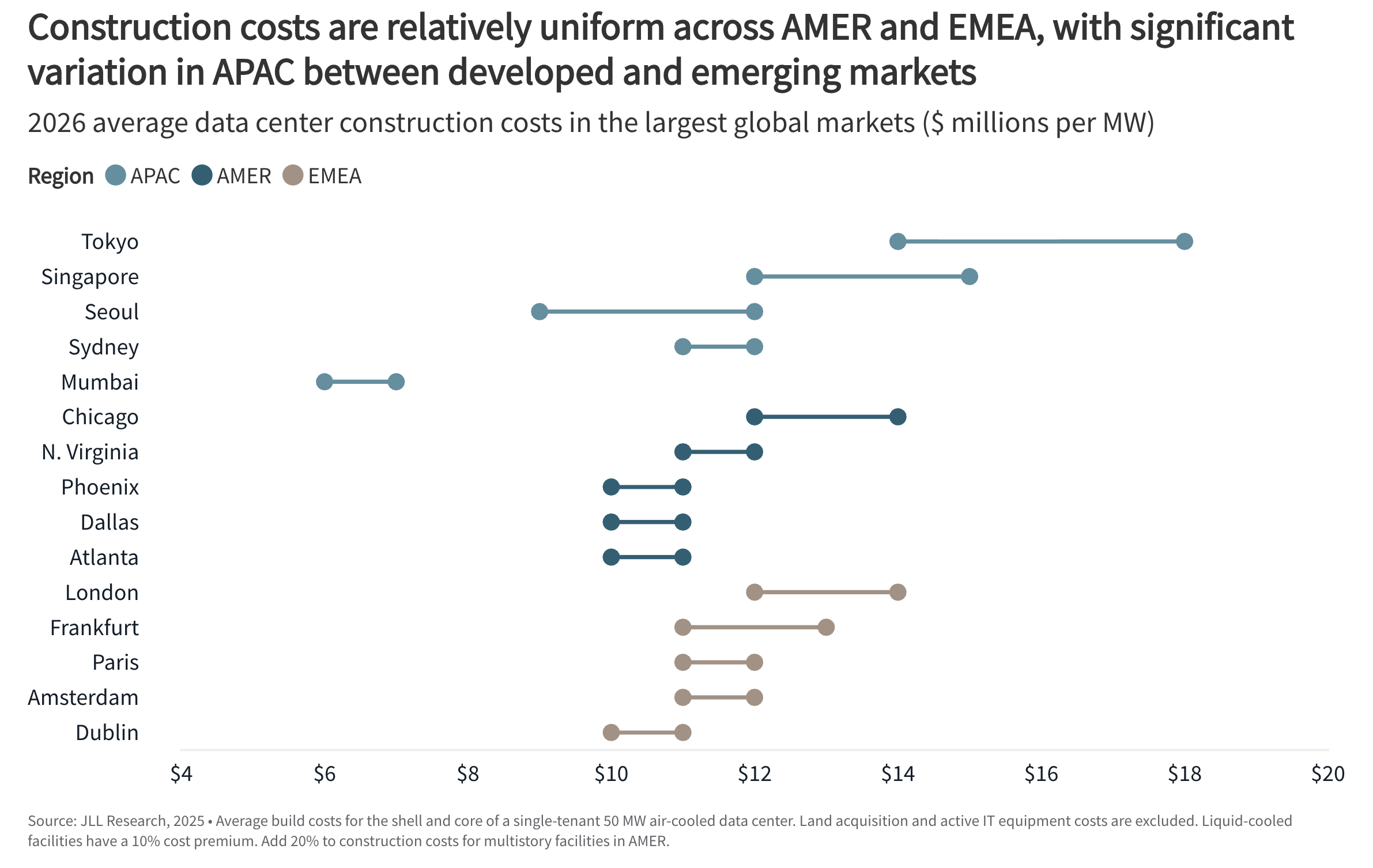

For every $10 billion spent on AI infrastructure, approximately one gigawatt of compute capacity comes online. [1]

[1] Between 2020 and 2025, the average global data center construction cost increased from $7.7 million to $10.7 million per MW, equating to a 7% CAGR. For 2026, JLL forecasts that the average global cost will increase 6% to $11.3 million per MW (from the JLL report).

In the United States, powering one gigawatt of compute costs roughly $350 million per year in electricity alone. [2] At $350 billion invested, which buys roughly 35 gigawatts at $10 billion each, we're looking at about $12 billion in additional annual electricity costs, before accounting for hardware depreciation, cooling, maintenance, or staffing.

[2] The price per MWh varies significantly by region. The estimated total of $350 million is based on an assumed average U.S. wholesale electricity price of $40 per MWh in 2025, as projected by the U.S. Energy Information Administration.

This level of capital and operating expenditure cannot be recouped through $20/month consumer subscriptions. Covering electricity costs alone for the planned $350 billion in new data centers would require approximately 50 million additional paying subscribers, year-round. That equates to roughly one in six U.S. residents subscribing to ChatGPT Plus or an equivalent service.
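The arithmetic behind these figures can be reproduced in a few lines. The sketch below is a back-of-the-envelope check, not a cost model: it uses only the numbers quoted above ($10 billion per gigawatt, $40 per MWh, $20 per month) and assumes, for simplicity, that the hardware draws its full rated power every hour of the year. The function and variable names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_electricity_cost_usd(capacity_gw: float, price_per_mwh: float) -> float:
    """Electricity cost of running `capacity_gw` at full load for one year.

    Assumption: 100% utilization and no cooling overhead, so this is a
    rough estimate rather than a measured figure.
    """
    capacity_mw = capacity_gw * 1_000
    return capacity_mw * HOURS_PER_YEAR * price_per_mwh


def subscribers_needed(annual_cost_usd: float, monthly_fee_usd: float) -> float:
    """Paying subscribers required to cover a yearly cost at a flat monthly fee."""
    return annual_cost_usd / (monthly_fee_usd * 12)


# Inputs taken from the article's own figures.
investment_usd = 350e9    # planned annual AI infrastructure investment
capex_per_gw_usd = 10e9   # roughly $10 billion per gigawatt of capacity
price_per_mwh = 40.0      # assumed average U.S. wholesale price, USD per MWh
monthly_fee_usd = 20.0    # consumer subscription price

capacity_gw = investment_usd / capex_per_gw_usd
cost_per_gw = annual_electricity_cost_usd(1, price_per_mwh)
total_cost = annual_electricity_cost_usd(capacity_gw, price_per_mwh)
subscribers = subscribers_needed(total_cost, monthly_fee_usd)

print(f"New capacity:          {capacity_gw:.0f} GW")
print(f"Electricity per GW:    ${cost_per_gw / 1e6:.1f} million per year")
print(f"Electricity in total:  ${total_cost / 1e9:.2f} billion per year")
print(f"Subscribers to cover:  {subscribers / 1e6:.1f} million")
```

Changing `price_per_mwh` or `monthly_fee_usd` shows how sensitive the subscriber count is to regional electricity prices and to pricing tiers. The conclusion survives any plausible input, because electricity is only one of several cost lines.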

Priced Like Labor, Used as Labor

Consumer subscriptions won't sustain these investments. Two paths remain: government and defense spending, or enterprise contracts priced at $1,000–10,000/month—like wages, not apps. The first raises questions beyond this piece. The second makes workforce replacement not an accidental outcome but an inherent condition of adoption. Systems priced like labor will be used as labor.

These investments are explicitly a bet that AI will replace workers at scale. This is the goal, not a side effect—even when dressed in philanthropic language about "AGI benefitting all of humanity".

The societal implications of replacing large segments of human labor deserve far more extensive treatment than this piece allows. Here I'll focus on the economic dynamics such a transformation would entail.

If AI replaces labor at scale, access to compute becomes access to workforce. The corporate landscape splits: firms with direct compute access, and firms buying productivity-as-a-service from providers. Today's cloud dependence is about reducing infrastructure costs. This would be buying output itself, at multiples of human capacity. The concentration of economic power would be immense. Big Tech could command an effective workforce orders of magnitude larger than any it controls today, deployable internally or sold to others.

This is the fear behind "AI eats software." But the premise deserves scrutiny.

Why Models Alone Won't Get There

The "AI eats software" narrative rests on this concentration of power: model providers won't just have better tools, they'll have a substantially larger workforce. But the reality is more complicated.

Everyone working with AI agents—especially beyond coding—knows we're not there yet. AI cannot handle the vast majority of business tasks out of the box. The age of large-scale workforce replacement hasn't arrived, and it remains uncertain when it will. Progress even seems to be slowing, with pure model scaling showing diminishing returns.

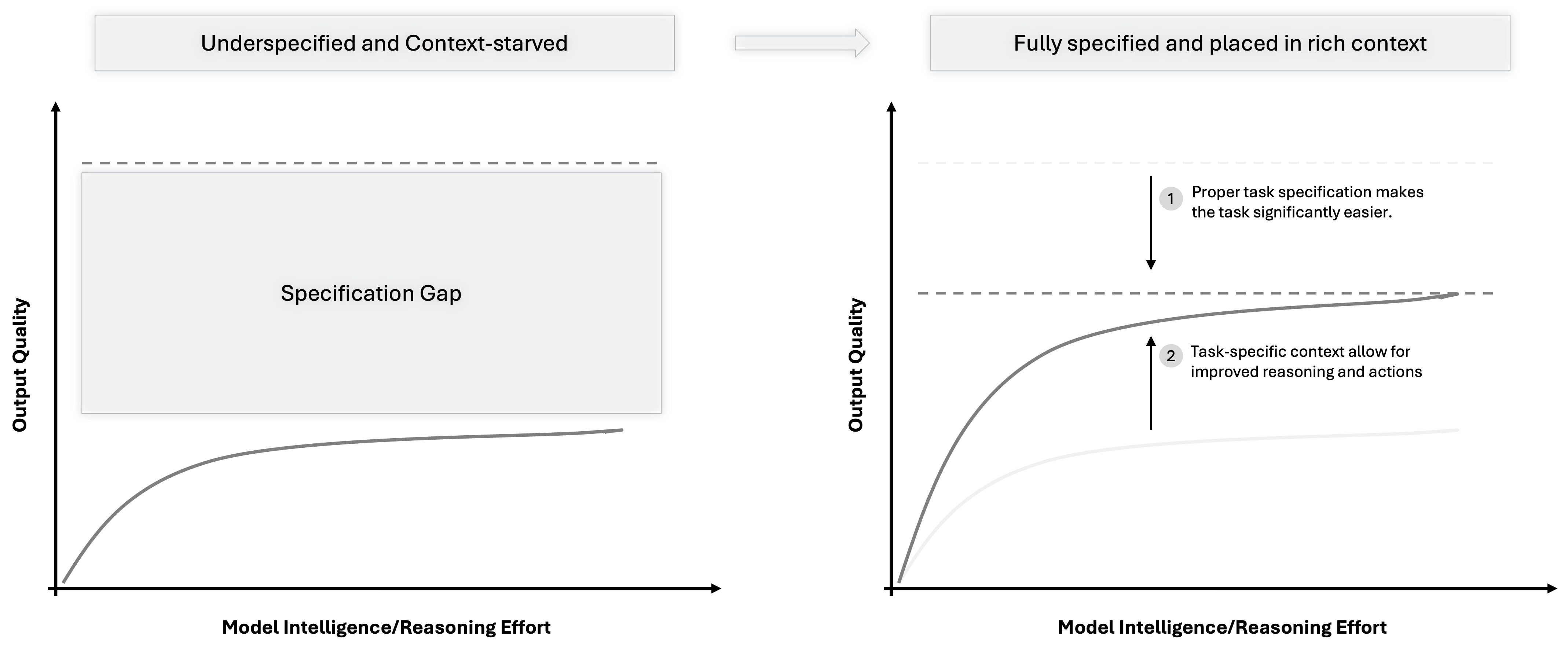

But here's the thing: for many use cases, model intelligence is no longer the bottleneck. Solving any task requires three things:

1. Task specification: what exactly needs to be done

2. Task-specific context: the relevant information and tools for this situation

3. A capable solver: the model itself

Better models improve (3), and partially (2) through stronger reasoning. But most of (2), and all of (1), cannot be solved by improving models alone.

This explains why benchmark performance and real-world performance are diverging. GPT-5 can match gold medalists at the International Mathematical Olympiad. Yet it struggles with routine agentic tasks in business contexts. Why? Math problems are fully specified and require no local context. Real-world problems are the opposite: under-specified and context-heavy.

Who Controls the Context Controls the Value

To recoup these investments, AI companies need use cases where they can replace human work at scale. So far, model providers have found a limited number: mainly software engineering and deep research. Consumer revenues help but won't suffice.

Every business process is a potential AI use case. Individually, these processes may be smaller than coding or research. But in aggregate, they dwarf them—approximately 60% of U.S. workers are knowledge workers, yet fewer than 2% are software developers. An inevitable push into B2B processes by all major AI providers is coming.

But conquering these processes requires something model providers don't have. The systems that run payroll, supply chains, customer relationships, and financial operations hold:

- Task-specific context, embedded in existing business systems

- Domain expertise, accumulated over decades, which helps close the specification gap

This is why "AI eating software" isn't inevitable—it's a battle. If compute becomes the new workforce, then context and domain knowledge become the chokepoints that determine where value accrues.

Software companies that recognize this have a clear strategic path: protect access to context through appropriate controls rather than open pipelines to AI providers; systematically capture domain knowledge in structured, machine-usable forms; and invest in understanding how business processes actually work, in enough detail that AI can execute them reliably.

The companies that play these cards well won't be eaten—they'll become the indispensable layer that makes AI actually useful. The question for enterprise software isn't whether to become compute players—the capital requirements are prohibitive. The question is how to remain essential in the value chain, and extract value accordingly.